One measure of a research program's vitality is its ability to reach beyond its home discipline and make genuine contributions to problems in other fields. Throughout my career, the structured light 3D imaging, computational sensing, and machine vision tools developed in my laboratory have been applied to a remarkably diverse set of real-world problems — from detecting snipers on a battlefield to scoring the body condition of dairy cows, from measuring pediatric vocal fold motion to phenotyping plant root systems. These applied collaborations are not peripheral to my core research; they are among its most rewarding expressions, and they have consistently generated new technical challenges that have fed back into the fundamental research program.

This page describes the major applied machine vision collaborations that have grown from my laboratory's core expertise in structured light 3D imaging, depth sensing, and computational imaging. Each project is described with its technical context, the specific machine vision problem it addresses, the funding that supported it, and the publications that resulted.

Anti-Sniper Detection: Mid-Infrared Bullet Tracking (2004–2010)

The problem. A sniper firing from a concealed position is one of the most dangerous threats faced by military and law enforcement personnel in urban environments. Acoustic gunshot detection systems can localize the muzzle blast but are confused by echoes and by suppressed weapons. An alternative approach is to detect the bullet itself in flight and back-project its trajectory to the shooter's position, but a supersonic bullet is small, fast, and produces only a faint thermal signature.

Our approach. Beginning in 2004, I led the University of Kentucky's component of a multi-institution program funded by the U.S. Marine Corps and later the U.S. Air Force, in partnership with M2 Technologies, CABEM Technologies, and Lockheed Martin. The program applied mid-infrared (mid-IR) camera technology, sensitive to wavelengths between 3 and 5 microns where aerodynamic heating of a supersonic projectile produces a detectable thermal signature, to bullet-in-flight tracking and sniper localization.

Technical approach. The core machine vision challenge was detecting a small, fast-moving thermal target against a cluttered background in real time. A supersonic bullet travels at roughly 900 meters per second, crossing the field of view of a mid-IR camera in a few milliseconds. The detection algorithm had to distinguish the bullet's thermal signature from background clutter (warm surfaces, atmospheric turbulence, birds, vehicles) while operating fast enough to track the bullet across multiple frames before it exited the field of view. Once detected and tracked across multiple camera frames, the bullet's 3D trajectory was recovered by triangulation from multiple calibrated camera positions, and the trajectory was back-projected to estimate the shooter's position.

Program outcomes. Conducted in three phases from 2004 to 2010, the program produced a portable, ruggedized anti-sniper detection system capable of detecting bullets in flight at ranges exceeding 200 meters. The University of Kentucky's total funding exceeded $2M within a program that eclipsed $10M across all partners. The final systems were delivered to the U.S. Air Force.
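The triangulation and back-projection steps described above can be illustrated with a minimal sketch. It is not the fielded system's algorithm: it assumes two calibrated cameras that each supply a viewing ray toward the bullet, a straight-line flight path (no drag or gravity), and a known plane on which the shooter is assumed to lie. The function names `triangulate_midpoint` and `back_project_to_plane` and all coordinates are hypothetical.

```python
def _sub(a, b):
    return tuple(x - y for x, y in zip(a, b))

def _add(a, b):
    return tuple(x + y for x, y in zip(a, b))

def _scale(a, s):
    return tuple(x * s for x in a)

def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def triangulate_midpoint(c1, d1, c2, d2):
    """Return the midpoint of the shortest segment between two viewing rays.

    Each ray is given by a camera center c and a direction d (assumed inputs
    that a calibrated camera model would provide for a detected pixel).
    """
    w = _sub(c1, c2)
    a, b, c = _dot(d1, d1), _dot(d1, d2), _dot(d2, d2)
    d, e = _dot(d1, w), _dot(d2, w)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("viewing rays are parallel")
    t1 = (b * e - c * d) / denom  # parameter of closest point on ray 1
    t2 = (a * e - b * d) / denom  # parameter of closest point on ray 2
    p1 = _add(c1, _scale(d1, t1))
    p2 = _add(c2, _scale(d2, t2))
    return _scale(_add(p1, p2), 0.5)

def back_project_to_plane(p_early, p_late, axis, value):
    """Extend the line through two track points to the plane coord[axis] == value."""
    direction = _sub(p_late, p_early)
    if abs(direction[axis]) < 1e-12:
        raise ValueError("trajectory is parallel to the plane")
    t = (value - p_early[axis]) / direction[axis]
    return _add(p_early, _scale(direction, t))

# Hypothetical geometry in meters: two cameras 10 m apart on the x axis.
cam1, cam2 = (0.0, 0.0, 0.0), (10.0, 0.0, 0.0)
bullet = triangulate_midpoint(cam1, (4.0, 50.0, 2.0), cam2, (-6.0, 50.0, 2.0))
# A second, later track point lets us extend the path back to the plane y = 250.
origin = back_project_to_plane(bullet, (5.0, 40.0, 2.0), axis=1, value=250.0)
```

In practice more than two track points would be fitted jointly and the ballistic arc would matter at long range; the straight-line model is used here only to keep the geometry visible.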

Royce Mohan with the automated vascular patterning assay system (NIH, 2005–2011).

High-Throughput Drug Discovery Screening (2005–2011)


The problem. Drug discovery pipelines rely on high-throughput screening (HTS): the automated testing of thousands to millions of chemical compounds against a biological target to identify candidates for further development. A critical step in many HTS assays is the quantitative analysis of microscopy images: counting cells, measuring their morphology, detecting fluorescent markers, and classifying their responses to candidate compounds. Automating this analysis with sufficient accuracy and speed to keep pace with modern HTS robots is a significant machine vision challenge.

Our approach. In collaboration with Professor Royce Mohan at the University of Connecticut Health Center (formerly at the University of Kentucky), my group developed machine vision algorithms for automated analysis of vascular patterning assays: biological assays that measure the ability of candidate compounds to inhibit or promote the growth of blood vessels, which is relevant to cancer treatment. The assays produce microscopy images of endothelial cell networks, and the machine vision system had to segment, measure, and classify these networks automatically and reproducibly.

Technical contributions. The key machine vision challenges were segmenting tubular vascular structures from noisy fluorescence microscopy images, extracting quantitative morphological features that correlate with biological activity, and processing thousands of images per screening run fast enough to keep pace with the HTS pipeline.

The problem. Accurate measurement of vocal fold vibration is essential for diagnosing and treating voice disorders in children. Standard clinical assessment uses stroboscopic video laryngoscopy, in which a camera inserted into the throat captures slow-motion video of the vocal folds vibrating during phonation. However, standard video provides only a 2D view of the vocal fold surface and cannot directly measure the 3D displacement, velocity, or surface deformation of the folds during vibration.

Our approach. In collaboration with Professor Ronald Patel in the University of Kentucky's Department of Communication Sciences and Disorders and Professor Kevin Donohue in ECE, we applied structured light laser projection to measure the 3D surface motion of pediatric vocal folds in vivo. A narrow laser line was projected across the vocal fold surface during laryngoscopy, and the deformation of the reflected laser line was analyzed frame by frame to recover the 3D surface profile as a function of time during the vibration cycle. This provided quantitative 3D kinematic data (displacement amplitude, asymmetry, mucosal wave velocity) that is not accessible from standard 2D video.
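The way a laser line's image displacement encodes surface height can be shown with a simplified triangulation sketch. It assumes an idealized geometry that is not taken from the published system: the camera looks straight down its optical axis, the laser sheet is inclined at a known angle to that axis, and the image scale in millimeters per pixel is known. The names and numbers below are hypothetical.

```python
import math

def height_from_shift(shift_px, mm_per_px, laser_angle_deg):
    """Convert a lateral laser-line shift in the image to a surface height change.

    With the laser sheet inclined at laser_angle_deg to the viewing axis, a
    height change dz moves the line sideways by dz * tan(angle), so
    dz = lateral_shift / tan(angle).
    """
    lateral_mm = shift_px * mm_per_px
    return lateral_mm / math.tan(math.radians(laser_angle_deg))

def vibration_amplitude(heights_mm):
    """Half the peak-to-peak height excursion over one vibration cycle."""
    return (max(heights_mm) - min(heights_mm)) / 2.0

# Hypothetical per-frame line shifts (pixels) at one point on the fold surface.
shifts = [0, 4, 8, 4, 0, -4, -8, -4]
heights = [height_from_shift(s, mm_per_px=0.05, laser_angle_deg=45.0) for s in shifts]
amplitude = vibration_amplitude(heights)
```

A real endoscopic setup would need a full camera and laser calibration rather than a single angle and scale factor; the sketch only shows why the line's deformation carries depth information.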

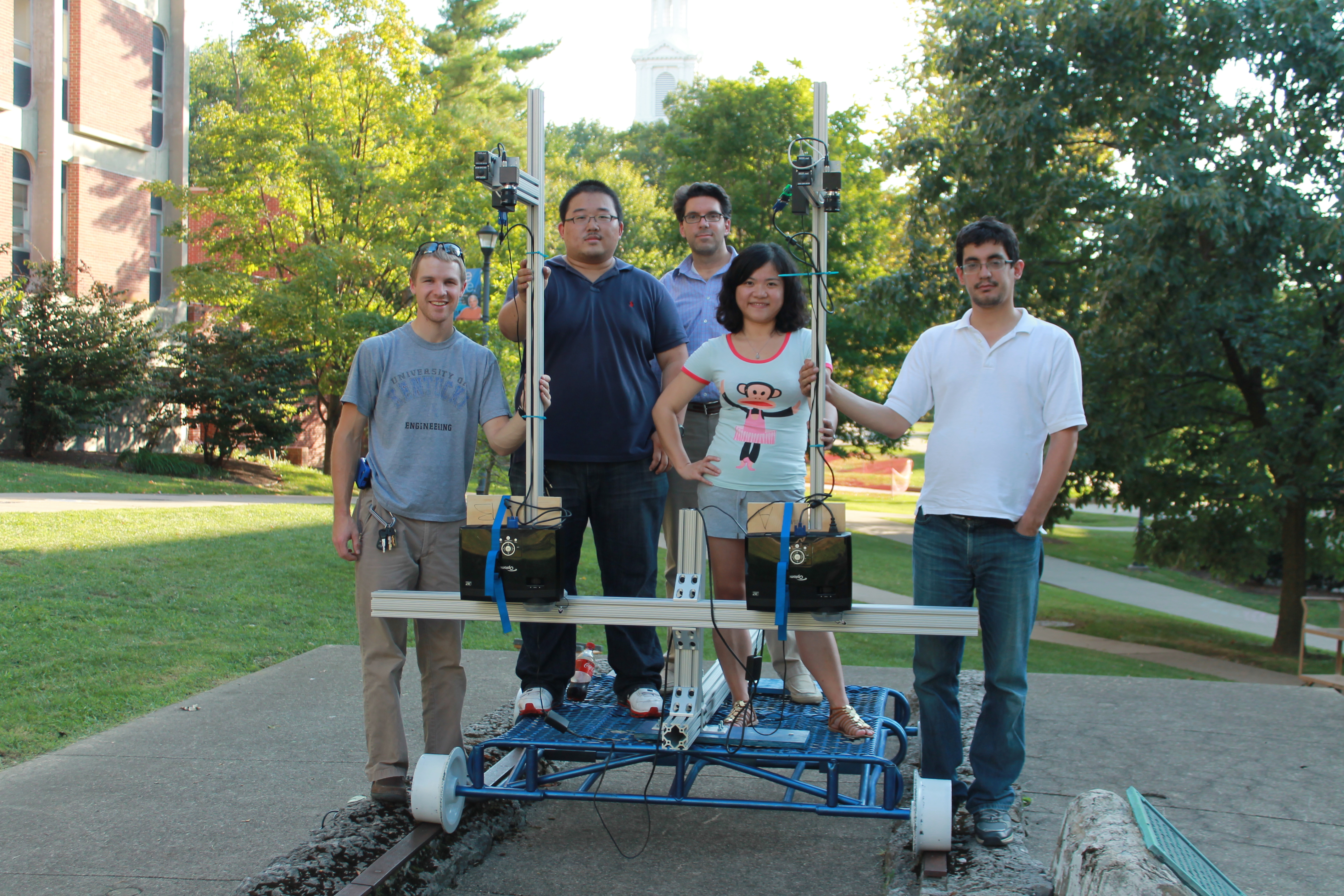

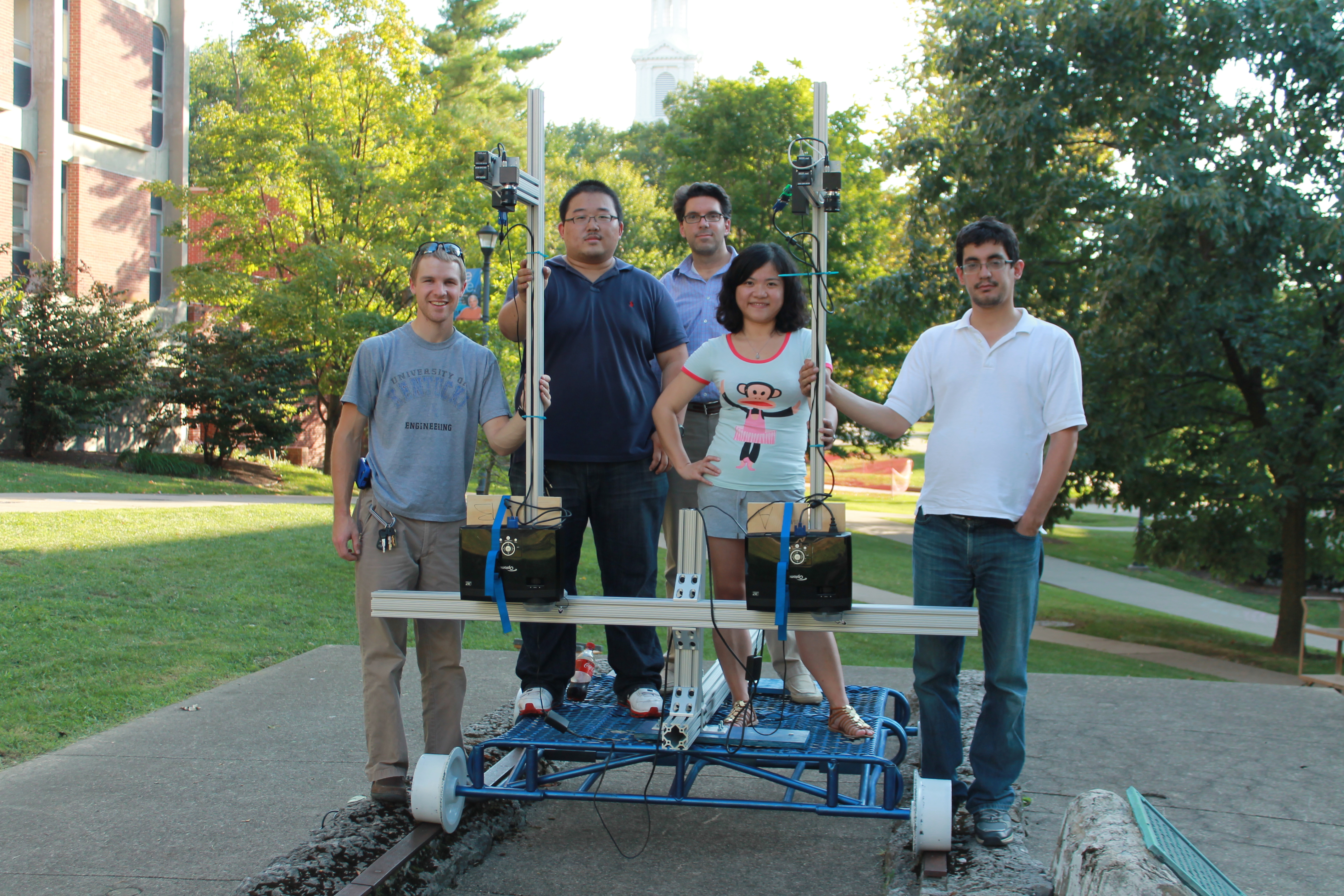

Student engineering team with the dual-projector structured light scanner for railroad track crossing assessment.

The problem. Rail-highway grade crossings — the intersections where roads cross railroad tracks — are a significant source of vehicle damage and safety hazards when the crossing surface deteriorates. Rough crossings cause vehicle suspension damage, cargo shifting, and driver discomfort, and identifying crossings in need of rehabilitation requires quantitative measurement of crossing roughness. Current practice relies largely on visual inspection, which is subjective and inconsistent. A quantitative, sensor-based roughness metric would enable prioritized, data-driven maintenance scheduling.

Our approach. In collaboration with Professor Reginald Souleyrette in the University of Kentucky's Department of Civil Engineering, and funded through the National University Rail (NURail) Center at the University of Illinois, we developed a 3D scanning approach to quantitative rail crossing roughness measurement. A structured light or laser scanning system mounted on a vehicle could capture the 3D surface profile of a crossing at highway speed, and the resulting point cloud could be analyzed to extract roughness metrics — surface height variance, slope discontinuities, settlement profiles — that correlate with the mechanical loads experienced by crossing vehicles.
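The roughness metrics named above can be sketched for a single longitudinal height profile extracted from a crossing scan. This is an illustrative calculation only, assuming evenly spaced height samples along the direction of travel; the function name `roughness_metrics`, the dictionary keys, and the sample values are hypothetical and are not the metrics defined in the published dynamic model.

```python
from statistics import pvariance

def roughness_metrics(heights_mm, spacing_mm):
    """Summarize one evenly sampled height profile across a crossing.

    Returns the height variance, the steepest slope, and the largest change
    in slope between adjacent segments (a simple slope-discontinuity measure).
    """
    if len(heights_mm) < 3:
        raise ValueError("need at least three samples")
    slopes = [(b - a) / spacing_mm for a, b in zip(heights_mm, heights_mm[1:])]
    slope_jumps = [abs(b - a) for a, b in zip(slopes, slopes[1:])]
    return {
        "height_variance_mm2": pvariance(heights_mm),
        "max_abs_slope": max(abs(s) for s in slopes),
        "max_slope_jump": max(slope_jumps),
    }

# Hypothetical profile: a 10 mm raised rail section sampled every 10 mm.
metrics = roughness_metrics([0.0, 0.0, 10.0, 10.0, 0.0], spacing_mm=10.0)
```

Metrics like these are only proxies; relating them to vehicle loads requires a vehicle dynamics model of the kind the project developed.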


Machine vision system monitoring dairy cows with a network of TOF cameras at the parlor exit.

Precision Dairy Farming: Body Condition Scoring and Feed Intake (2012–2020)


The problem. In modern commercial dairy farming, individual cow health and productivity are strongly influenced by body condition, a measure of fat reserves analogous to a body mass index for cattle. Body condition scoring (BCS) is a standard practice in which a trained technician assigns each cow a score from 1 (emaciated) to 5 (obese) based on visual and tactile assessment of specific anatomical landmarks. BCS is a strong predictor of reproductive performance, milk yield, and disease risk. However, manual BCS is labor-intensive, subjective, and inconsistent across scorers, and a farm with hundreds of cows cannot practically score every animal every day.

Our approach. In collaboration with Professor Jeffrey Bewley in the University of Kentucky's Department of Animal and Food Sciences, and funded by the Kentucky Science and Technology Corporation, we developed an automated BCS system based on depth camera imaging. A depth camera mounted above the milking parlor exit captures a 3D point cloud of each cow's dorsal surface as she walks through. Machine vision algorithms segment the cow from the background, extract the anatomical landmarks used in manual BCS (the spine, pin bones, hook bones, and tail head), and compute a predicted BCS from the 3D geometry of these landmarks. The system processes each cow automatically as she passes through the milking parlor, requiring no additional labor and producing consistent, reproducible scores that can be tracked over time to detect trends in herd health. This work was extended to automated feed intake measurement by M.S. student Anthony Shelley.

Senior design team with the self-contained body condition scoring system at the UK dairy.

Plant Root Phenotyping (2020–2022)


The problem. Root architecture (the spatial distribution, depth, branching pattern, and volume of a plant's root system) is a critical but poorly understood determinant of crop performance under stress. Roots that reach deeper into the soil profile can access water and nutrients unavailable to shallow-rooted plants, making root architecture a target for breeding programs focused on drought tolerance and nitrogen use efficiency. However, measuring root architecture in large plants is technically difficult: roots are underground, extraction damages them, and manual measurement is prohibitively slow for the large sample sizes needed in breeding programs.

Our approach. In collaboration with faculty in the University of Kentucky's College of Agriculture, we developed a machine vision pipeline for non-destructive 3D phenotyping of root systems in large plants. Excavated root crowns were imaged using structured light and depth sensing to produce accurate 3D point clouds of the root architecture. Custom algorithms segmented individual roots from the point cloud, measured root angles, diameters, and lengths, and computed summary statistics relevant to agronomic performance.
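Two of the measurements mentioned above, root length and root angle, can be sketched for a single root once it has been segmented and reduced to an ordered centerline. The sketch assumes that centerline is already available as a list of 3D points with z pointing up; the function names and coordinates are hypothetical and do not reproduce the published pipeline.

```python
import math

def root_length(centerline):
    """Sum of straight-line segment lengths along an ordered 3D centerline."""
    return sum(math.dist(a, b) for a, b in zip(centerline, centerline[1:]))

def root_angle_from_vertical(centerline):
    """Angle in degrees between the root's base-to-tip chord and the vertical axis."""
    base, tip = centerline[0], centerline[-1]
    horizontal = math.hypot(tip[0] - base[0], tip[1] - base[1])
    vertical = abs(tip[2] - base[2])
    return math.degrees(math.atan2(horizontal, vertical))

# Hypothetical root (millimeters): grows 30 mm outward while descending 40 mm.
root = [(0.0, 0.0, 0.0), (15.0, 0.0, -20.0), (30.0, 0.0, -40.0)]
length_mm = root_length(root)
angle_deg = root_angle_from_vertical(root)
```

Extracting clean centerlines from a noisy point cloud is the hard part of the problem; the geometry above is the easy final step.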

The problem. Facial asymmetry (differences in the size, shape, or position of facial structures between the left and right sides) is clinically significant in orthodontics, oral surgery, and reconstructive procedures. Quantitative measurement of facial asymmetry traditionally relies on 2D cephalometric radiographs, which cannot capture the full 3D geometry of the face and skull. 3D facial scanning using structured light or stereophotogrammetry provides a complete surface model of the face, but automated analysis of this data for asymmetry assessment requires robust algorithms for landmark detection, surface registration, and asymmetry quantification.

Our approach. In collaboration with clinical faculty in the University of Kentucky's College of Dentistry, we developed an AI-powered pipeline for precision assessment of facial asymmetry from 3D facial scans, combining deep learning landmark detection with 3D surface analysis.
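One common way to quantify landmark-based asymmetry is to mirror each left-side landmark across the midsagittal plane and measure its distance to the matching right-side landmark. The sketch below illustrates that idea under stated assumptions: the scan has already been registered so that the midsagittal plane is x = 0, and paired landmarks have already been detected. The function name, landmark names, and coordinates are hypothetical, and this is not claimed to be the metric used in the published study.

```python
import math

def asymmetry_scores(paired_landmarks):
    """Distance between each mirrored left landmark and its right counterpart.

    paired_landmarks maps a landmark name to (left_xyz, right_xyz), with the
    face aligned so that the midsagittal plane is x = 0 (an assumption).
    """
    scores = {}
    for name, (left, right) in paired_landmarks.items():
        mirrored_left = (-left[0], left[1], left[2])
        scores[name] = math.dist(mirrored_left, right)
    return scores

# Hypothetical landmark coordinates in millimeters.
landmarks = {
    "exocanthion": ((-45.0, 30.0, 10.0), (45.0, 30.0, 10.0)),
    "cheilion": ((-25.0, -40.0, 5.0), (28.0, -36.0, 5.0)),
}
scores = asymmetry_scores(landmarks)
```

A score of zero means the pair is perfectly symmetric about the chosen plane, so the result depends on how well that plane was estimated during registration.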

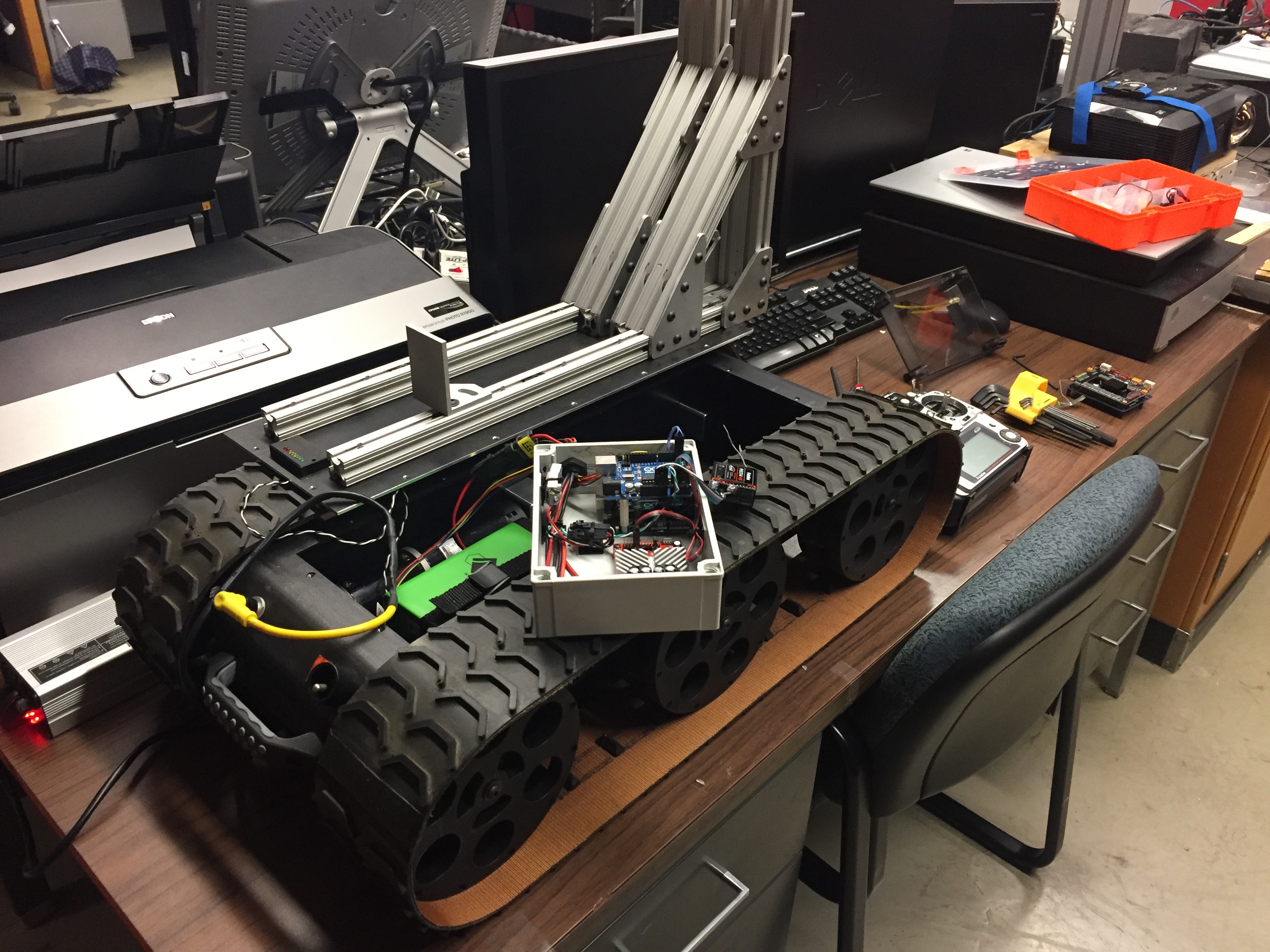

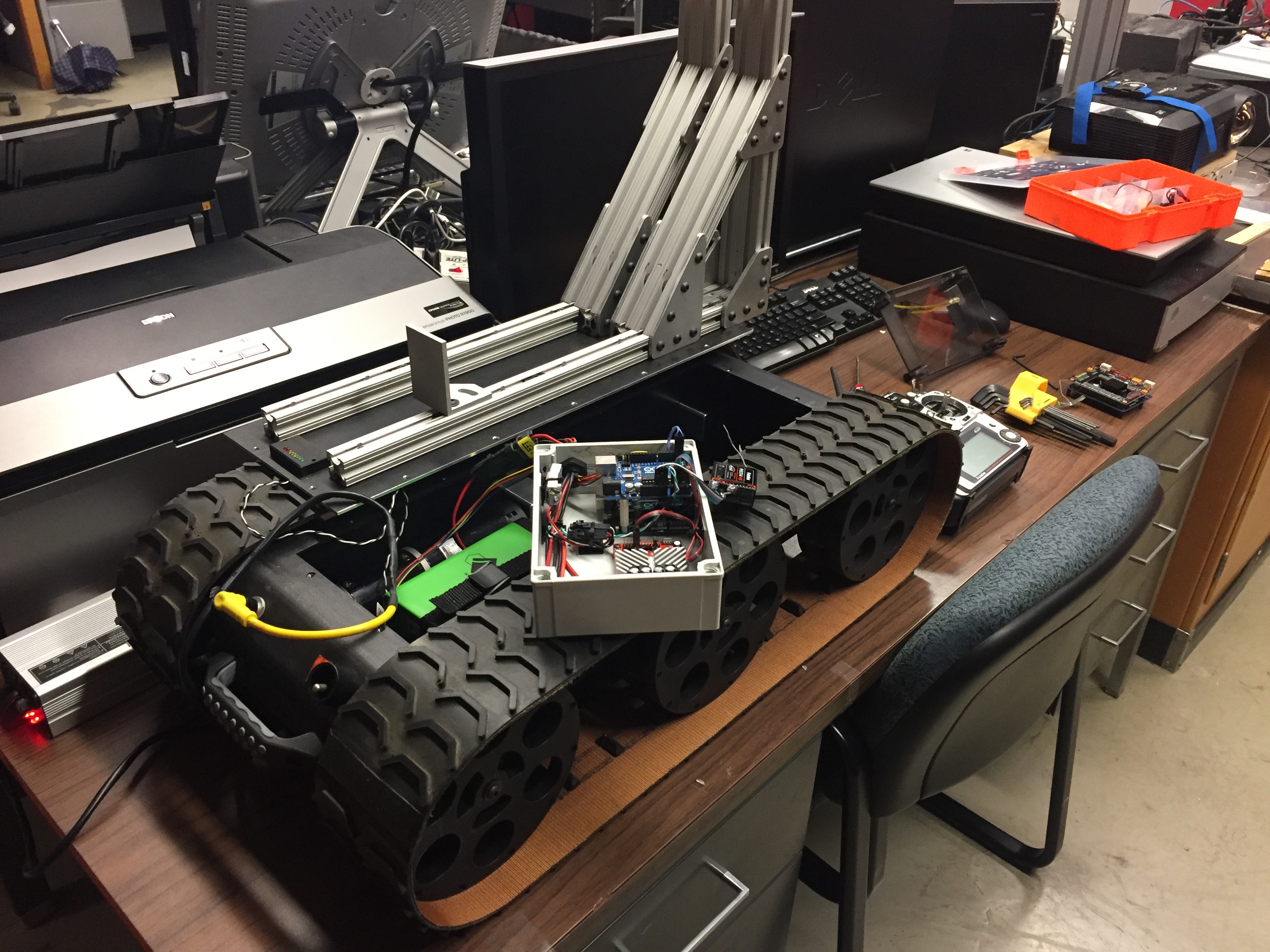

An autonomous robot being equipped with a 3D imaging system, 2016.

Contactless Tire Inspection (2023–Present)


The problem. Tire condition (tread depth, sidewall damage, and structural integrity) is a critical safety parameter for vehicles ranging from passenger cars to commercial trucks and aircraft. Current tire inspection is largely manual and subjective, relying on visual assessment by a technician and periodic depth gauge measurements at discrete points on the tread surface. This approach is slow, inconsistent across inspectors, and unable to detect subtle subsurface damage or distributed wear patterns that develop across the full tire surface. A contactless, automated 3D inspection system could provide consistent, quantitative tire condition assessment at vehicle speeds, enabling continuous fleet monitoring without disrupting operations.

Our approach. This work grows directly from the structured light 3D measurement technology developed in Thrust 1, applied to the specific geometric and operational constraints of tire inspection. A structured light sensor mounted at a service bay or roadway checkpoint captures the full 3D surface geometry of a tire as the vehicle passes over it at low speed. The resulting point cloud is analyzed to extract tread depth maps across the full tire width, detect localized damage features such as cuts, bulges, and punctures, and compute wear uniformity metrics that indicate alignment or inflation problems. This application is covered by a pending U.S. patent application.
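The step from a scanned surface to tread depth can be sketched for one cross-section of the tire. The sketch assumes the point cloud has already been reduced to radial heights sampled across the tread width, and it takes a high quantile of those heights as the tread surface reference. The function names, the quantile, the threshold, and the sample profile are all hypothetical choices for illustration, not the method in the patent application.

```python
def tread_depth_profile(radial_heights_mm, surface_quantile=0.9):
    """Depth below the tread surface at each sample across the tire width.

    The tread surface reference is taken as a high quantile of the radial
    heights, so that a few noisy high points do not define the surface.
    """
    ordered = sorted(radial_heights_mm)
    index = min(len(ordered) - 1, int(surface_quantile * len(ordered)))
    reference = ordered[index]
    return [max(0.0, reference - h) for h in radial_heights_mm]

def groove_depths(depths_mm, groove_threshold_mm=1.0):
    """Maximum depth of each contiguous run of samples deeper than the threshold."""
    grooves, current = [], None
    for depth in depths_mm:
        if depth > groove_threshold_mm:
            current = depth if current is None else max(current, depth)
        elif current is not None:
            grooves.append(current)
            current = None
    if current is not None:
        grooves.append(current)
    return grooves

# Hypothetical cross-section: one 6 mm groove and one worn 3 mm groove.
profile = [300.0, 300.0, 294.0, 294.0, 300.0, 300.0, 300.0, 297.0, 300.0, 300.0]
depths = tread_depth_profile(profile)
grooves = groove_depths(depths)
```

Comparing groove depths across the width is one simple way to expose uneven wear, since the shallowest groove dominates the safety assessment.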

The problem. Unmanned aircraft systems (UAS) equipped with multispectral cameras have become a standard tool in precision agriculture for monitoring crop health, detecting early-stage disease and stress, guiding variable-rate fertilizer application, and estimating yield. The spectral reflectance indices computed from multispectral imagery (including the Normalized Difference Vegetation Index (NDVI), the Red Edge Chlorophyll Index, and dozens of others) are well-validated proxies for plant physiological parameters including chlorophyll content, leaf area index, canopy nitrogen status, and water stress. However, the accuracy of these indices depends critically on the spectral and spatial calibration of the UAS-mounted sensor, which is technically challenging: sensor response varies with temperature, illumination angle, and flight altitude; geometric distortions from the UAS platform require correction; and the rapid motion of the aircraft demands fast, robust calibration procedures that can be applied automatically in the field without specialized equipment.

Our approach. Funded by a USDA/NIFA grant ($613K, 2023–2027) in collaboration with Professors Sama and Bailey in the University of Kentucky's College of Agriculture, Food and Environment, my group is developing improved calibration methods for UAS-mounted multispectral cameras. The technical work draws on the coded aperture design and spectral reconstruction methods developed in Thrust 3, adapted to the specific constraints of UAS deployment: miniaturized sensors, wide illumination variation, fast acquisition, and the need for robust real-time processing on embedded hardware. Specific contributions include:

The problem. The electrical power grid is the largest and most complex machine ever built, and its reliable operation depends on accurate, real-time knowledge of the state of thousands of distributed assets (transformers, feeders, substations, and transmission lines) whose condition and loading change continuously with weather, demand patterns, and generation mix. Utilities currently rely on sparse, infrequent manual inspections and on coarse aggregate measurements that cannot distinguish the contributions of individual assets. As the grid integrates more distributed renewable generation, electric vehicles, and flexible loads, the need for fine-grained, sensor-based asset monitoring becomes more urgent.

Our approach. Funded by a U.S. Department of Energy grant ($1M, 2025–2027) in collaboration with Professor Liao in the University of Kentucky's Department of Electrical and Computer Engineering, this project develops signal processing and machine learning methods for extracting actionable intelligence from sensor data collected at grid assets. Specific technical contributions include:

The problem. Emergency airway management, securing an airway in a patient who cannot breathe independently, is one of the most time-critical and technically demanding procedures in emergency medicine. Endotracheal intubation requires inserting a tube through the mouth, past the vocal cords, and into the trachea, often under suboptimal conditions with limited visibility. Training for this procedure relies on physical mannequins and simulation, but standard mannequins provide no visual feedback about the spatial relationship between the intubation tool and the relevant anatomy. Augmented reality overlays that project anatomical guidance directly onto the mannequin's surface could significantly improve the efficiency and effectiveness of intubation training.

Our approach. Funded by the University of Kentucky Vice President for Research and College Deans Igniting Research Collaborations (IRC) program ($36K, 2020–2021), this project developed a proof-of-concept spatial augmented reality system for intubation training. A structured light smart camera, of the type developed in the SAR work described in Thrust 1, first scanned the mannequin to build a precise 3D surface model, then projected anatomically accurate overlays (the trachea, vocal cords, and cricothyroid membrane) registered to the mannequin's physical geometry in real time. A first responder trainee could see the relevant anatomy highlighted directly on the mannequin's surface without wearing any headgear, enabling natural, unencumbered practice of the intubation technique with immediate visual feedback. This project was a collaboration with medical faculty in the University of Kentucky's College of Medicine and demonstrated the clinical training potential of the SAR platform developed in Thrust 1.

Augmented Reality for Autonomous Vehicle Simulation (2019)

The background. Spatial augmented reality has an application in autonomous vehicle development that is less obvious but technically compelling: the ability to project virtual objects (pedestrians, cyclists, road markings, traffic signs) onto a physical test track or indoor driving environment, creating mixed-reality test scenarios that combine real vehicle dynamics with virtual environmental content. This approach enables testing of perception and decision algorithms under controlled, repeatable conditions that would be dangerous or expensive to reproduce in the real world. My group's structured light SAR platform is directly applicable to this use case, projecting dynamic virtual content onto road surfaces and barriers in a test environment at the resolution and frame rate needed to appear realistic to a vehicle's camera and lidar sensors. This application is currently an area of exploratory development.

Summary of Applied Vision Projects

A Note on Interdisciplinary Collaboration

The diversity of applications on this page — printing, defense, medicine, agriculture, transportation, energy — reflects a deliberate strategy rather than opportunism. Each of these collaborations was initiated because a colleague in another field recognized that a problem they could not solve with their own tools could potentially be addressed with the 3D imaging, depth sensing, or machine vision methods developed in my laboratory. In every case, the collaboration produced not only a publication or a funded project but also new technical challenges that enriched the core research program. The dairy farming work motivated improvements in depth camera calibration for non-cooperative subjects. The vocal fold work pushed structured light miniaturization toward endoscopic dimensions. The rail crossing work required new point cloud analysis methods for irregular surface profiling. The drug discovery work introduced problems in biological image segmentation that informed later work in spectral imaging. This cross-pollination between applied and fundamental research is, in my view, one of the most productive dynamics in engineering science — and one that I work to cultivate deliberately in my research group and in the graduate students I mentor.


High-throughput drug discovery screening, technical contributions. The key machine vision challenges were:

- Segmenting tubular vascular structures from noisy fluorescence microscopy images with widely varying staining quality

- Extracting quantitative morphological features — total tube length, branch point density, network connectivity — that correlate with biological activity

- Processing large numbers of images (thousands per screening run) with sufficient throughput to be compatible with the HTS pipeline
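The feature extraction challenge listed above can be made concrete with a small sketch that computes total tube length, branch points, and end points from a skeletonized network image. It assumes the segmentation and thinning steps have already produced a one-pixel-wide, 4-connected binary skeleton, which is a simplification; the function name and the toy image are hypothetical and this is not the group's published algorithm.

```python
def network_features(skeleton):
    """Count skeleton pixels, branch points, and end points in a binary grid.

    skeleton is a list of rows of 0/1 values. Neighbors are counted with
    4-connectivity: a pixel with three or more neighbors is treated as a
    branch point and a pixel with exactly one neighbor as an end point.
    """
    rows, cols = len(skeleton), len(skeleton[0])
    length = branch_points = end_points = 0
    for r in range(rows):
        for c in range(cols):
            if not skeleton[r][c]:
                continue
            length += 1
            neighbors = sum(
                1
                for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1))
                if 0 <= r + dr < rows and 0 <= c + dc < cols and skeleton[r + dr][c + dc]
            )
            if neighbors >= 3:
                branch_points += 1
            elif neighbors == 1:
                end_points += 1
    return {"length_px": length, "branch_points": branch_points, "end_points": end_points}

# Toy skeleton: two tubes crossing at the center of a 5 x 5 image.
plus = [
    [0, 0, 1, 0, 0],
    [0, 0, 1, 0, 0],
    [1, 1, 1, 1, 1],
    [0, 0, 1, 0, 0],
    [0, 0, 1, 0, 0],
]
features = network_features(plus)
```

Real skeletons contain diagonal steps and spurs, so a production implementation would need 8-connectivity handling and pruning; the sketch only shows how network topology becomes countable features.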

Publications from the drug discovery screening work:

- P. Bargagna-Mohan et al., “Withaferin A Targets Intermediate Filaments Glial Fibrillary Acidic Protein and Vimentin in a Model of Retinal Gliosis,” Journal of Biological Chemistry, vol. 285, pp. 7657–7669, 2010.

- P. Bargagna-Mohan et al., “A Corneal Anti-Fibrotic Switch Identified in Genetic and Pharmacological Deficiency of Vimentin,” Journal of Biological Chemistry, 2011.

- R. R. Paranthan, P. Bargagna-Mohan, D. L. Lau, and R. Mohan, “A Robust Model to Simultaneously Induce Corneal Neovascularization and Retinal Gliosis in the Mouse Eye,” Molecular Vision, vol. 17, pp. 1901–1908, 2011.

Publication from the pediatric vocal fold motion work:

- R. R. Patel, K. D. Donohue, D. L. Lau, and H. Unnikrishnan, “In Vivo Measurement of Pediatric Vocal Fold Motion Using Structured Light Laser Projection,” Journal of Voice, vol. 27, no. 4, July 2013, pp. 463–472.

Publications from the rail-highway grade crossing roughness work:

- T. Wang, R. R. Souleyrette, A. K. Aboubakr, and D. L. Lau, “A Dynamic Model for Quantifying Rail-Highway Grade Crossing Roughness,” Journal of Transportation Safety and Security, November 2015.

- T. Wang, R. R. Souleyrette, D. L. Lau, and X. Peng, “Rail Highway Grade Crossing Roughness Quantitative Measurement Using 3D Technology,” Proceedings of the Joint Rail Conference, JRC 2014-3778, Colorado Springs, April 2014.

- T. Wang, R. R. Souleyrette, D. L. Lau, A. Aboubakr, and E. Randerson, “Quantifying Rail-Highway Grade Crossing Roughness Accelerations and Dynamic Modeling,” Transportation Research Board 94th Annual Meeting, No. TRB15-4825, Washington DC, 2015.

Publications from the precision dairy farming work:

- A. N. Shelley, D. L. Lau, A. E. Stone, and J. M. Bewley, “Short Communication: Measuring Feed Volume and Weight by Machine Vision,” Journal of Dairy Science, vol. 99, no. 1, 2016, pp. 1–6.

- K. Zhao, A. N. Shelley, D. L. Lau, K. A. Dolecheck, and J. M. Bewley, “Automatic Body Condition Scoring System for Dairy Cows Based on Depth-Image Analysis,” International Journal of Agricultural and Biological Engineering, vol. 13, no. 4, pp. 45–54, August 2020.

Publication from the plant root phenotyping work:

- B. Rinehart, H. Poffenbarger, D. L. Lau, and D. McNear, “A Method for Phenotyping Roots of Large Plants,” The Plant Phenome Journal, vol. 5, no. 1, e20041, 2022.

The problem. Facial asymmetry — differences in the size, shape, or position of facial structures between the left and right sides — is clinically significant in orthodontics, oral surgery, and reconstructive procedures. Quantitative measurement of facial asymmetry traditionally relies on 2D cephalometric radiographs, which cannot capture the full 3D geometry of the face and skull. 3D facial scanning using structured light or stereophotogrammetry provides a complete surface model of the face, but automated analysis of this data for asymmetry assessment requires robust algorithms for landmark detection, surface registration, and asymmetry quantification. Our approach. In collaboration with clinical faculty in the University of Kentucky's College of Dentistry, we developed an AI-powered pipeline for precision assessment of facial asymmetry from 3D facial scans, combining deep learning landmark detection with 3D surface analysis:

- M. Adel, K. J. Hunt, D. L. Lau, J. K. Hartsfield, H. Reyes-Centeno, C. S. Beeman, T. Elshebiny, and L. Sharab, “Precision Assessment of Facial Asymmetry Using 3D Imaging and Artificial Intelligence,” Journal of Clinical Medicine, 2025.

An autonomous robot being equipped with a 3D imaging system, 2016.


The problem. Tire condition — tread depth, sidewall damage, and structural integrity — is a critical safety parameter for vehicles ranging from passenger cars to commercial trucks and aircraft. Current tire inspection is largely manual and subjective, relying on visual assessment by a technician and periodic depth gauge measurements at discrete points on the tread surface. This approach is slow, inconsistent across inspectors, and unable to detect subtle subsurface damage or distributed wear patterns that develop across the full tire surface. A contactless, automated 3D inspection system could provide consistent, quantitative tire condition assessment at vehicle speeds, enabling continuous fleet monitoring without disrupting operations. Our approach. This work grows directly from the structured light 3D measurement technology developed in Thrust 1, applied to the specific geometric and operational constraints of tire inspection. A structured light sensor mounted at a service bay or roadway checkpoint captures the full 3D surface geometry of a tire as the vehicle passes over it at low speed. The resulting point cloud is analyzed to extract tread depth maps across the full tire width, detect localized damage features such as cuts, bulges, and punctures, and compute wear uniformity metrics that indicate alignment or inflation problems. This application is covered by a pending U.S. patent application:

- A. Sworski, R. England, J. D. Dambacher, M. Bellis, D. L. Lau, E. Crane, and S. Thomas, Contactless Tire Inspection, U.S. Patent Application 20230391144, December 7, 2023.
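The tread depth extraction described above can be illustrated with a minimal sketch. It is not the method of the patent application. It assumes a single cross-tread height profile in millimeters has already been extracted from the point cloud, takes the upper-quartile height as the tread surface, and reports the deepest point of each contiguous groove. The function name, the threshold, and the sample profile are all hypothetical.

```python
def groove_depths(profile_mm, min_depth_mm=1.0):
    """Return the maximum depth (mm) of each contiguous groove in one profile.

    profile_mm: surface heights sampled across the tire width (hypothetical input).
    min_depth_mm: samples at least this far below the tread surface count as groove.
    """
    ordered = sorted(profile_mm)
    # Assumption: most samples lie on the ribs, so the upper-quartile height
    # is a reasonable estimate of the tread surface.
    surface = ordered[(3 * len(ordered)) // 4]

    depths = []
    current = 0.0  # deepest point seen in the groove currently being traversed
    for height in profile_mm:
        depth = surface - height
        if depth >= min_depth_mm:
            current = max(current, depth)
        elif current > 0.0:
            # Back on a rib: close out the groove that just ended.
            depths.append(current)
            current = 0.0
    if current > 0.0:
        depths.append(current)
    return depths


# Hypothetical profile with two grooves (heights in mm).
example_profile = [10.0, 10.0, 10.0, 3.0, 2.5, 3.0, 10.0, 10.0,
                   10.0, 10.0, 4.0, 4.0, 10.0, 10.0, 10.0, 10.0]
example_depths = groove_depths(example_profile)
print(example_depths)
```

Repeating this over many profiles around the circumference would give a depth map of the kind the text describes. A real system would also need to handle sensor noise, tire curvature, and missing samples, which this sketch ignores.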

The problem. Unmanned aircraft systems (UAS) equipped with multispectral cameras have become a standard tool in precision agriculture for monitoring crop health, detecting early-stage disease and stress, guiding variable-rate fertilizer application, and estimating yield. The spectral reflectance indices computed from multispectral imagery — including the Normalized Difference Vegetation Index (NDVI), the Red Edge Chlorophyll Index, and dozens of others — are well-validated proxies for plant physiological parameters including chlorophyll content, leaf area index, canopy nitrogen status, and water stress. However, the accuracy of these indices depends critically on the spectral and spatial calibration of the UAS-mounted sensor, which is technically challenging: sensor response varies with temperature, illumination angle, and flight altitude; geometric distortions from the UAS platform require correction; and the rapid motion of the aircraft demands fast, robust calibration procedures that can be applied automatically in the field without specialized equipment. Our approach. Funded by a USDA/NIFA grant ($613K, 2023–2027) in collaboration with Professors Sama and Bailey in the University of Kentucky's College of Agriculture, Food and Environment, my group is developing improved calibration methods for UAS-mounted multispectral cameras. The technical work draws on the coded aperture design and spectral reconstruction methods developed in Thrust 3, adapted to the specific constraints of UAS deployment: miniaturized sensors, wide illumination variation, fast acquisition, and the need for robust real-time processing on embedded hardware. Specific contributions include:

- Vicarious radiometric calibration methods that use ground-based reference targets of known reflectance to correct for sensor response variation across flights and illumination conditions, without requiring laboratory recalibration of the sensor

- Geometric correction algorithms that compensate for rolling shutter distortion, vibration-induced motion blur, and lens distortion in miniaturized wide-angle optics

- Spectral unmixing approaches that separate the contributions of multiple plant and soil materials to mixed pixels at the spatial resolution of the UAS sensor, enabling sub-pixel-scale agronomic analysis
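As an illustration of the first contribution, the sketch below fits a per-band linear gain and offset from ground reference panels of known reflectance, then computes NDVI from the calibrated red and near-infrared values. The linear fit is the empirical line method, which is one common choice. The text does not say which calibration model the project uses, so treat it as an assumption. All panel reflectances, digital numbers (DN), and function names are hypothetical.

```python
def fit_empirical_line(panel_dn, panel_reflectance):
    """Least-squares fit of reflectance = gain * DN + offset for one band."""
    n = len(panel_dn)
    if n < 2 or n != len(panel_reflectance):
        raise ValueError("need at least two matched panel measurements")
    mean_dn = sum(panel_dn) / n
    mean_rf = sum(panel_reflectance) / n
    sxx = sum((d - mean_dn) ** 2 for d in panel_dn)
    if sxx == 0:
        raise ValueError("panel DNs must not all be equal")
    sxy = sum((d - mean_dn) * (r - mean_rf)
              for d, r in zip(panel_dn, panel_reflectance))
    gain = sxy / sxx
    offset = mean_rf - gain * mean_dn
    return gain, offset


def to_reflectance(dn, gain, offset):
    """Apply the fitted line and clamp to the physical range [0, 1]."""
    return min(1.0, max(0.0, gain * dn + offset))


def ndvi(nir, red):
    """Normalized Difference Vegetation Index from calibrated reflectances."""
    total = nir + red
    return 0.0 if total == 0 else (nir - red) / total


# Hypothetical reference panels (known reflectance) and their measured DNs.
panel_reflectance = [0.05, 0.25, 0.50]
red_gain, red_offset = fit_empirical_line([100, 500, 1000], panel_reflectance)
nir_gain, nir_offset = fit_empirical_line([200, 600, 1100], panel_reflectance)

# One hypothetical vegetation pixel.
red = to_reflectance(200, red_gain, red_offset)
nir = to_reflectance(1100, nir_gain, nir_offset)
pixel_ndvi = ndvi(nir, red)
print(round(pixel_ndvi, 3))
```

Refitting the gain and offset for each flight is what allows the correction to follow changes in illumination and sensor response without returning the camera to the laboratory.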

The problem. The electrical power grid is the largest and most complex machine ever built, and its reliable operation depends on accurate, real-time knowledge of the state of thousands of distributed assets — transformers, feeders, substations, and transmission lines — whose condition and loading change continuously with weather, demand patterns, and generation mix. Utilities currently rely on sparse, infrequent manual inspections and on coarse aggregate measurements that cannot distinguish the contributions of individual assets. As the grid integrates more distributed renewable generation, electric vehicles, and flexible loads, the need for fine-grained, sensor-based asset monitoring becomes more urgent. Our approach. Funded by a U.S. Department of Energy grant ($1M, 2025–2027) in collaboration with Professor Liao in the University of Kentucky's Department of Electrical and Computer Engineering, this project develops signal processing and machine learning methods for extracting actionable intelligence from sensor data collected at grid assets. Specific technical contributions include:

- Load disaggregation algorithms that separate the contributions of individual end-use loads — HVAC systems, electric vehicle chargers, industrial motors — from aggregate smart meter measurements, using non-negative matrix factorization and graph-based inference

- Capacity utilization modeling that estimates the thermal loading and remaining useful life of distribution transformers from time-series current and voltage measurements, without requiring physical inspection

- Event detection and localization methods that identify and locate fault events — incipient insulation failures, conductor contacts, cable degradation — from the transient signatures they produce in voltage and current waveforms measured at accessible grid nodes
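The load disaggregation idea in the first item can be sketched in a simplified form. Full non-negative matrix factorization learns both the load signatures and their activations. The example below assumes the signatures are already known and estimates only the non-negative activations, using the multiplicative update familiar from NMF. It omits the graph-based inference entirely. The signatures, the meter readings, and the function name are hypothetical.

```python
def disaggregate(aggregate, signatures, iterations=500, eps=1e-12):
    """Estimate non-negative activations h with sum_k h[k] * signatures[k] ~ aggregate.

    aggregate: meter readings over time (non-negative).
    signatures: one known non-negative load shape per end-use load (assumed given).
    """
    k = len(signatures)
    h = [1.0] * k  # positive start; multiplicative updates keep h non-negative

    # Precompute W^T v and W^T W, where the columns of W are the signatures.
    wtv = [sum(s * a for s, a in zip(sig, aggregate)) for sig in signatures]
    wtw = [[sum(a * b for a, b in zip(si, sj)) for sj in signatures]
           for si in signatures]

    for _ in range(iterations):
        denom = [sum(wtw[i][j] * h[j] for j in range(k)) for i in range(k)]
        # Multiplicative update: h <- h * (W^T v) / (W^T W h)
        h = [h[i] * wtv[i] / (denom[i] + eps) for i in range(k)]
    return h


# Hypothetical unit signatures over six time steps.
hvac = [1, 1, 1, 0, 0, 0]
ev_charger = [0, 0, 1, 1, 1, 1]
# Aggregate reading built as 2.0 * hvac + 3.0 * ev_charger.
meter = [2, 2, 5, 3, 3, 3]

activations = disaggregate(meter, [hvac, ev_charger])
print([round(a, 3) for a in activations])
```

With real smart meter data the signatures would themselves be learned, and the factorization would run over many time windows rather than a single short vector.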

The problem. Emergency airway management — securing an airway in a patient who cannot breathe independently — is one of the most time-critical and technically demanding procedures in emergency medicine. Endotracheal intubation requires inserting a tube through the mouth, past the vocal cords, and into the trachea, often under suboptimal conditions with limited visibility. Training for this procedure relies on physical mannequins and simulation, but standard mannequins provide no visual feedback about the spatial relationship between the intubation tool and the relevant anatomy. Augmented reality overlays that project anatomical guidance directly onto the mannequin's surface could significantly improve the efficiency and effectiveness of intubation training. Our approach. Funded by the University of Kentucky Vice President for Research and College Deans Igniting Research Collaborations (IRC) program ($36K, 2020–2021), this project developed a proof-of-concept spatial augmented reality (SAR) system for intubation training. A structured light smart camera of the type developed in the SAR work described in Thrust 1 first scanned the mannequin to build a precise 3D surface model, then projected anatomically accurate overlays (the trachea, vocal cords, and cricothyroid membrane) registered to the mannequin's physical geometry in real time. A first responder trainee could see the relevant anatomy highlighted directly on the mannequin's surface without wearing any headgear, enabling natural, unencumbered practice of the intubation technique with immediate visual feedback. This project was a collaboration with medical faculty in the University of Kentucky's College of Medicine and demonstrated the clinical training potential of the SAR platform.

Augmented Reality for Autonomous Vehicle Simulation (2019)

The background. Spatial augmented reality has an application in autonomous vehicle development that is less obvious but technically compelling: the ability to project virtual objects — pedestrians, cyclists, road markings, traffic signs — onto a physical test track or indoor driving environment, creating mixed-reality test scenarios that combine real vehicle dynamics with virtual environmental content. This approach enables testing of perception and decision algorithms under controlled, repeatable conditions that would be dangerous or expensive to reproduce in the real world. My group's structured light SAR platform is directly applicable to this use case, projecting dynamic virtual content onto road surfaces and barriers in a test environment at the resolution and frame rate needed to appear realistic to a vehicle's camera and lidar sensors. This application is currently an area of exploratory development.

Summary of Applied Vision Projects

| Project | Collaborator(s) | Sponsor | Funding | Period |
|---|---|---|---|---|
| Anti-Sniper Mid-IR Detection | M2 Tech., CABEM, Lockheed Martin | DoD / USMC / USAF | $2M+ (UK) | 2004–2010 |
| Drug Discovery HTS | Prof. R. Mohan (UConn Health) | NIH NCI / NINDS | $1.06M | 2005–2011 |
| Vocal Fold Motion | Prof. R. Patel, Prof. K. Donohue (UK) | Internal | — | 2012–2013 |
| Rail Crossing Assessment | Prof. R. Souleyrette (UK) | NURail / Univ. Illinois | $296K | 2012–2016 |
| Dairy Cow BCS and Feed Intake | Prof. J. Bewley (UK Agriculture) | KY Science and Technology Co. | $50K | 2012–2014 |
| Plant Root Phenotyping | Prof. D. McNear (UK Agriculture) | Internal | — | 2020–2022 |
| AR Intubation Training | UK College of Medicine | UK IRC (internal) | $36K | 2020–2021 |
| Facial Asymmetry Assessment | UK College of Dentistry | Internal | — | 2024–2025 |
| Contactless Tire Inspection | Industrial partners | Industry | — | 2023–present |
| UAS Precision Agriculture | Prof. Sama, Prof. Bailey (UK Agriculture) | USDA/NIFA | $613K | 2023–2027 |
| Grid Asset Monitoring | Prof. Liao (UK ECE) | U.S. Dept. of Energy | $1.0M | 2025–2027 |

The diversity of applications on this page — printing, defense, medicine, agriculture, transportation, energy — reflects a deliberate strategy rather than opportunism. Each of these collaborations was initiated because a colleague in another field recognized that a problem they could not solve with their own tools could potentially be addressed with the 3D imaging, depth sensing, or machine vision methods developed in my laboratory. In every case, the collaboration produced not only a publication or a funded project but also new technical challenges that enriched the core research program. The dairy farming work motivated improvements in depth camera calibration for non-cooperative subjects. The vocal fold work pushed structured light miniaturization toward endoscopic dimensions. The rail crossing work required new point cloud analysis methods for irregular surface profiling. The drug discovery work introduced problems in biological image segmentation that informed later work in spectral imaging. This cross-pollination between applied and fundamental research is, in my view, one of the most productive dynamics in engineering science — and one that I work to cultivate deliberately in my research group and in the graduate students I mentor.