Structured light 3D imaging is a form of active triangulation in which a projector casts a carefully designed pattern of light onto a scene while one or more synchronized cameras capture the resulting images. Because the projector and camera are physically separated, any surface in the scene causes the projected pattern to appear geometrically distorted when viewed from the camera's perspective. By analyzing this distortion — specifically by measuring how much each point in the projected pattern has shifted from its expected position — one can recover the 3D coordinates of every visible point on the surface. The result is a dense, accurate point cloud or depth map that can be used for inspection, recognition, display, or fabrication.

Structured light is distinguished from passive stereo vision (which relies on finding matching features between two cameras) and from time-of-flight sensing (which measures round-trip travel time of a light pulse) by its combination of high spatial resolution, robustness to texture-poor surfaces, and compatibility with high-speed operation. My group has worked on structured light systems continuously since 2002, contributing to pattern design, calibration, phase unwrapping, real-time reconstruction, multi-path correction, and spatial augmented reality. This page describes the major phases of that work.

Minoru Niimura and his daughter with the first real-time structured light system at a machine vision show in Yokohama, Japan.

Background: How Structured Light Works

The most common and accurate class of structured light systems uses phase-shifting profilometry. Rather than projecting a single pattern, the system projects a sequence of sinusoidal fringe patterns, each shifted in phase by a fixed amount. For each pixel in the camera image, the relative intensities across the sequence encode a phase value that corresponds to the projector column (or row) that illuminated that pixel. Once phase is known for every camera pixel, triangulation geometry converts phase directly to 3D coordinates.

The elegance of phase-shifting lies in its noise averaging (using multiple images suppresses random photon noise) and in its sub-pixel accuracy, which routinely achieves depth resolution of a fraction of a millimeter at standoff distances of a meter or more. The challenges lie in phase unwrapping (sinusoidal phase is inherently periodic, so resolving ambiguity across multiple fringes requires additional information), nonlinearity correction (projector gamma response distorts the sinusoidal patterns), multi-path interference (surfaces that reflect light onto each other corrupt the phase measurement), calibration (projector and camera lens distortions must be precisely characterized), and speed (acquiring multiple sequential patterns limits frame rate for moving objects). My group has made original contributions to all of these challenges.

Phase 1: Real-Time Structured Light and the Composite Pattern Approach (2002–2007)

The earliest work in my group, carried out with Prof. Laurence Hassebrook and funded by a NASA STTR Phase I award, addressed the problem of recovering 3D structure from a single projected pattern rather than a time-consuming sequence. We developed the composite structured light approach, in which multiple sinusoidal gratings at different spatial frequencies and orientations are superimposed into a single projected image. A camera captures one image, and digital signal processing demodulates the individual frequency components to recover depth across the scene. This approach enabled real-time 3D video acquisition (capturing, processing, and displaying point clouds at video frame rates), which was a significant advance at the time.
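The composite idea can be illustrated with a small simulation. This is a minimal sketch under stated assumptions, not the published implementation: it assumes each phase-shifted fringe (varying along x) is multiplied by its own carrier (varying along y), that the scene is flat so each fringe is constant along y, and that the carrier frequencies fall on exact FFT bins. The image size, carrier values, and variable names are hypothetical.

```python
import numpy as np

H, W, N = 256, 256, 3          # image rows, columns, number of multiplexed fringes
carriers = [16, 32, 48]        # carrier cycles per image height (hypothetical values)
shifts = 2 * np.pi * np.arange(N) / N

y = np.arange(H)[:, None]
x = np.arange(W)[None, :]
true_phase = 2 * np.pi * x / W - np.pi          # phase to recover, shape (1, W)

# Build one composite image: each fringe rides on its own carrier.
composite = np.full((H, W), 0.5)
for f, d in zip(carriers, shifts):
    fringe = 0.5 + 0.5 * np.cos(true_phase - d)     # varies along x
    carrier = np.cos(2 * np.pi * f * y / H)         # varies along y
    composite += (0.5 / N) * carrier * fringe

# Demodulate: the FFT magnitude at each carrier bin is proportional to that fringe.
spectrum = np.fft.fft(composite, axis=0)
channels = np.stack([2 * np.abs(spectrum[f]) / H for f in carriers])   # shape (N, W)

# Standard N-step phase recovery applied to the demodulated channels.
num = np.sum(channels * np.sin(shifts)[:, None], axis=0)
den = np.sum(channels * np.cos(shifts)[:, None], axis=0)
phase = np.arctan2(num, den)

# Compare on the circle so that -pi and +pi count as the same angle.
err = np.angle(np.exp(1j * (phase - true_phase.ravel())))
max_err = np.max(np.abs(err))
```

On a real surface the fringes are no longer constant along y, so single-bin demodulation would have to be replaced by band-pass filtering around each carrier, which trades spatial resolution for single-shot capture.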

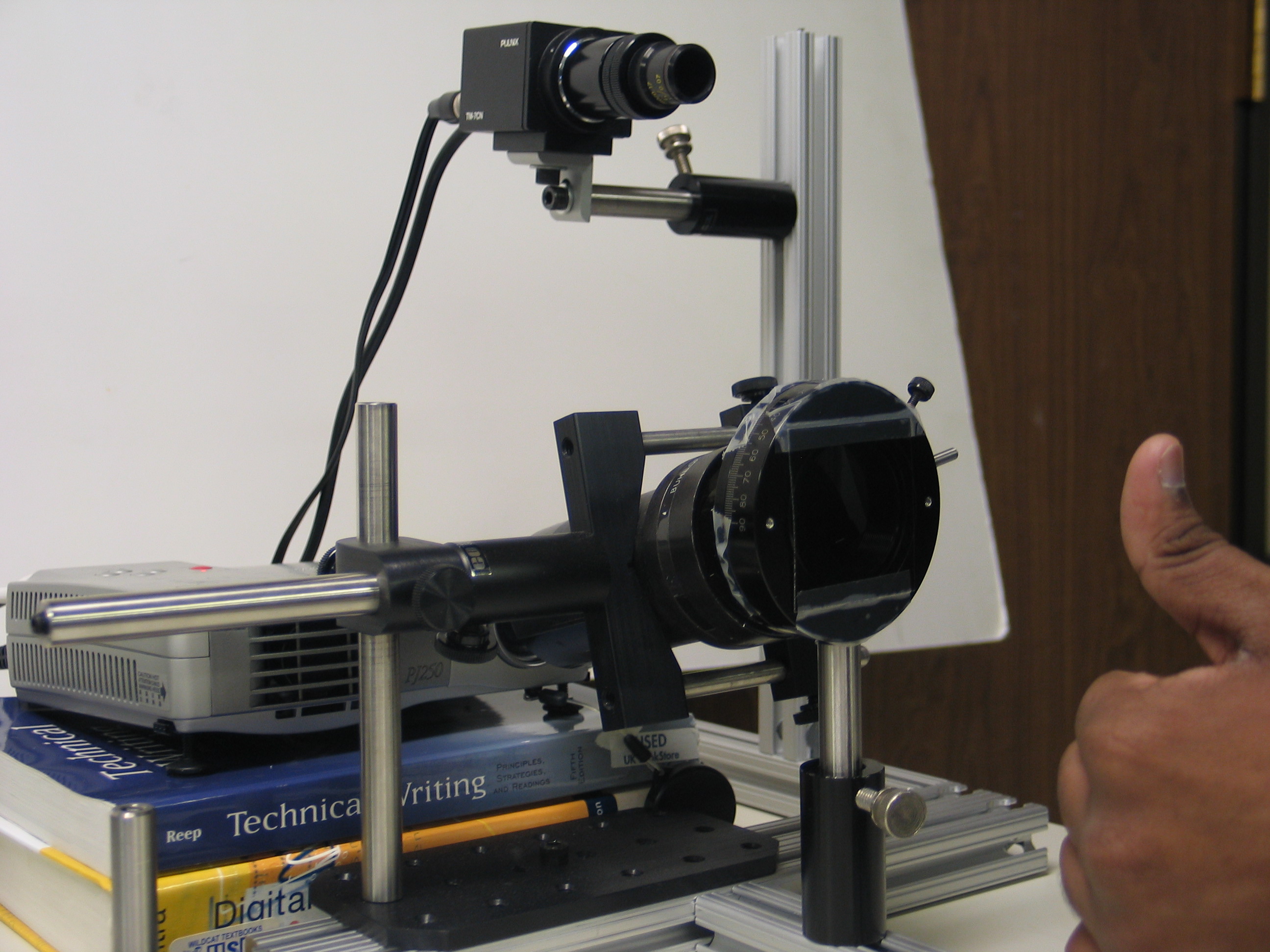

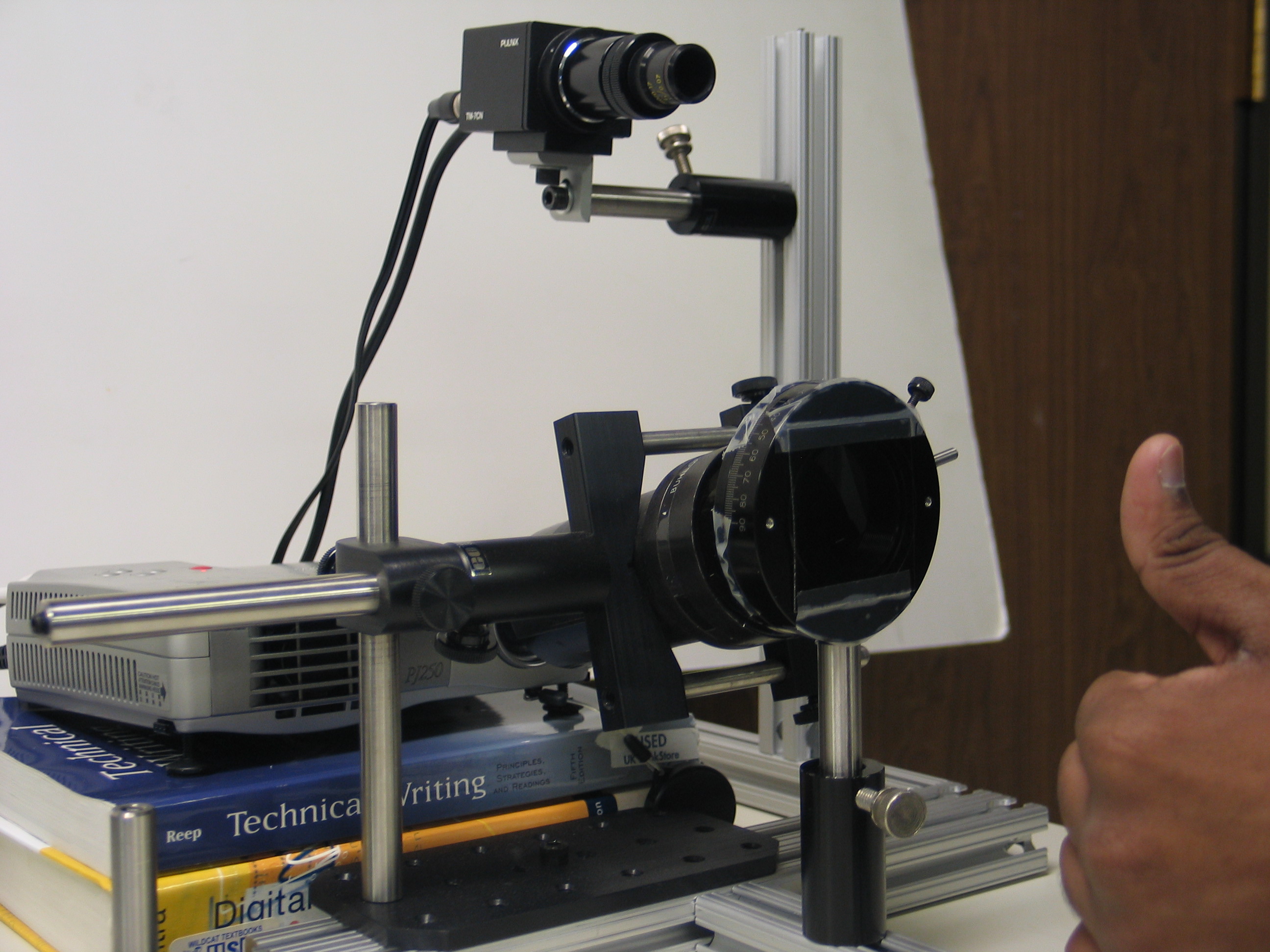

The very first prototype of the 3D non-contact fingerprint scanner.

Phase 2: Non-Contact 3D Fingerprint Scanning (2005–2010)

Conventional fingerprint scanners require a finger to be pressed flat against a glass platen, a process that introduces distortion from skin deformation and raises hygiene concerns in high-throughput environments such as border crossings and access control. The NIJ Fast Fingerprint Capture Program challenged researchers to develop a scanner capable of capturing all ten fingers in under thirty seconds without contact. Working with Prof. Hassebrook and funded through the National Institutes for Hometown Security ($988K), my group proposed using structured light to capture the full 3D geometry of each finger in a single flash acquisition, then mathematically unrolling the curved fingerprint surface into a flat 2D image compatible with existing matching databases. This was a novel and, at the time, controversial idea, because the fingerprint community had little experience with 3D data. We were one of only four teams selected for the program, alongside Carnegie Mellon University, and the only academic team from the Southeast.

Two early Seikowave scanners. The ruggedized unit (right) survives a 1-meter drop onto concrete without recalibration.

Phase 3: High-Speed 3D Shape Measurement and the Dual-Frequency Pattern (2008–2013)

Phase-shifting systems require projecting and capturing a minimum of three sequential patterns to recover phase, which limits frame rate. For structured light to be useful in industrial inspection of moving parts, robotic guidance, or real-time human-computer interaction, the entire acquisition-processing-display pipeline must operate at or above video frame rates. We developed the dual-frequency pattern scheme, in which two sets of sinusoidal patterns at different spatial frequencies are interleaved in a carefully designed projection sequence. The lower frequency provides an unambiguous coarse phase estimate that resolves the periodicity ambiguity of the higher-frequency measurement, which in turn provides the fine-resolution depth map. By optimizing the number of patterns, their frequencies, and their phase shifts, we achieved structured light acquisition, processing, and 3D point cloud display at over 150 frames per second, at the time far beyond any published result, using commodity DLP projectors and standard frame grabber hardware.
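The way a coarse phase resolves the ambiguity of a fine phase can be sketched as follows. This is generic two-frequency temporal unwrapping on simulated data, offered as an illustration rather than the exact pattern design of the dual-frequency scheme; the pattern count, frequencies, noise level, and function names are assumptions.

```python
import numpy as np

def wrapped_phase(images, shifts):
    """N-step phase shifting. images has shape (N, ...); shifts are in radians."""
    s = np.tensordot(np.sin(shifts), images, axes=1)
    c = np.tensordot(np.cos(shifts), images, axes=1)
    return np.mod(np.arctan2(s, c), 2 * np.pi)      # wrapped to [0, 2*pi)

def unwrap_with_coarse(phi_high, phi_low, ratio):
    """Pick the fringe order of phi_high that best agrees with the coarse phase."""
    order = np.round((ratio * phi_low - phi_high) / (2 * np.pi))
    return phi_high + 2 * np.pi * order

# Simulated projector coordinate seen by each camera pixel, normalized to (0, 1).
rng = np.random.default_rng(0)
coord = rng.uniform(0.02, 0.98, size=1000)

N, f_low, f_high = 4, 1, 16                         # hypothetical design values
shifts = 2 * np.pi * np.arange(N) / N

def capture(freq):
    """Noisy camera intensities for N shifted fringes at the given frequency."""
    ideal = 0.5 + 0.4 * np.cos(2 * np.pi * freq * coord[None, :] - shifts[:, None])
    return ideal + rng.normal(0.0, 0.005, size=ideal.shape)

phi_low = wrapped_phase(capture(f_low), shifts)     # unambiguous but noisy
phi_high = wrapped_phase(capture(f_high), shifts)   # precise but periodic
absolute = unwrap_with_coarse(phi_high, phi_low, f_high / f_low)
recovered = absolute / (2 * np.pi * f_high)         # back to a normalized coordinate
```

The coarse phase only has to be accurate enough to select the correct fringe order, so its noise does not propagate into `recovered`; the final precision comes from the high-frequency measurement.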

In parallel with the structured light program, I led a $2M+ program at the University of Kentucky as part of a $10M+ multi-partner effort with M2 Technologies, CABEM Technologies, and Lockheed Martin, funded by the U.S. Marine Corps and later the U.S. Air Force. The program applied mid-infrared (mid-IR) camera technology to detect and track bullets in flight as a method of real-time sniper localization. The core technical challenge was detecting the faint mid-IR signature that a supersonic bullet produces through aerodynamic heating and its bow shock. By deploying an array of calibrated mid-IR cameras with overlapping fields of view, the system could triangulate the bullet trajectory in 3D and back-project to the shooter's position within seconds of the first shot. Conducted in three phases from 2004 to 2010, the program produced a portable, ruggedized anti-sniper system capable of detecting bullets in flight at ranges exceeding 200 meters. The final systems were delivered to the U.S. Air Force.
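The triangulate-then-back-project geometry can be sketched in a few lines. This is a simplified illustration, not the fielded system: it assumes noise-free bearing rays from calibrated cameras and a straight-line bullet path (ignoring drag and gravity), and all positions and function names are hypothetical.

```python
import numpy as np

def triangulate(origins, directions):
    """Least-squares point closest to a set of rays (one ray per camera)."""
    A = np.zeros((3, 3))
    b = np.zeros(3)
    for o, d in zip(origins, directions):
        d = d / np.linalg.norm(d)
        P = np.eye(3) - np.outer(d, d)      # projects onto the plane normal to the ray
        A += P
        b += P @ o
    return np.linalg.solve(A, b)

def fit_line(points):
    """Best-fit 3D line through points. Returns (centroid, unit direction)."""
    centroid = points.mean(axis=0)
    _, _, vt = np.linalg.svd(points - centroid)
    return centroid, vt[0]

# Hypothetical setup: three cameras and a bullet flying straight from `shooter`.
cameras = np.array([[0.0, 0.0, 0.0], [10.0, 0.0, 0.0], [5.0, 8.0, 0.0]])
shooter = np.array([-150.0, 40.0, 2.0])
heading = np.array([1.0, -0.2, 0.0])
heading = heading / np.linalg.norm(heading)
true_track = shooter + np.outer([120.0, 130.0, 140.0, 150.0], heading)

# Each camera contributes only a bearing toward the bullet at each time step.
track = np.array([triangulate(cameras, p - cameras) for p in true_track])

# Fit the trajectory and measure how closely its extension passes the shooter.
centroid, direction = fit_line(track)
miss = np.linalg.norm(np.cross(shooter - centroid, direction))
```

A fitted line constrains only the direction back toward the shooter; recovering a position along it would need extra information such as terrain or a range estimate, which this sketch leaves out.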

PhD student Ying Yu with the back-lit caltag calibration pattern for structured light scanners.

Phase 5: Calibration, Multi-Path Correction, and Epipolar Geometry (2014–2024)

As structured light systems matured from laboratory demonstrations to deployed products, the field's attention shifted from raw speed to accuracy and robustness, particularly in challenging scenes with inter-reflections, subsurface scattering, and projector nonlinearity. Projector gamma response distorts the projected sinusoidal patterns, introducing systematic phase errors. To address this, we developed an efficient online nonlinearity calibration algorithm.
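The effect of projector gamma on recovered phase can be demonstrated numerically. The sketch below assumes a simple power-law response with a known exponent and corrects it by direct inversion; it is an illustration of the error mechanism, not the online calibration algorithm mentioned above, and the gamma value and function names are assumptions.

```python
import numpy as np

def wrapped_phase(images, shifts):
    """N-step phase shifting. images has shape (N, ...); shifts are in radians."""
    s = np.tensordot(np.sin(shifts), images, axes=1)
    c = np.tensordot(np.cos(shifts), images, axes=1)
    return np.arctan2(s, c)

def max_phase_error(n_steps, gamma, correct=False):
    """Worst-case phase error (radians) for a power-law projector response."""
    theta = np.linspace(-3.0, 3.0, 2001)            # true phase samples
    shifts = 2 * np.pi * np.arange(n_steps) / n_steps
    ideal = 0.5 + 0.45 * np.cos(theta[None, :] - shifts[:, None])
    captured = ideal ** gamma                       # distorted, non-sinusoidal fringes
    if correct:
        captured = captured ** (1.0 / gamma)        # invert the assumed-known gamma
    err = np.angle(np.exp(1j * (wrapped_phase(captured, shifts) - theta)))
    return np.max(np.abs(err))

err_3 = max_phase_error(3, gamma=2.2)               # few steps: harmonics alias into phase
err_6 = max_phase_error(6, gamma=2.2)               # more steps suppress low harmonics
err_fixed = max_phase_error(3, gamma=2.2, correct=True)
```

Comparing `err_3` with `err_6` shows why adding patterns reduces gamma error at the cost of speed, while `err_fixed` shows that compensating the response lets a short sequence stay accurate.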

A student demonstrates the digital face paint system, projecting Pennywise in real-time registration with facial expressions.

Phase 6: Spatial Augmented Reality (2017–Present)

Spatial augmented reality (SAR), also called projection mapping or shader lamps, is the complement of head-mounted AR: rather than overlaying digital content on a display worn by the user, SAR projects digital content directly onto physical surfaces, making the surfaces themselves appear to change color, texture, or shape. Unlike a standard video projector, a SAR system must account precisely for the 3D geometry of the projection surface so that the projected image appears geometrically correct from the viewer's perspective. This requires the projector to know the 3D structure of the scene, which is exactly the information that a structured light scanner provides.

The natural convergence of structured light 3D sensing and SAR display is the core idea behind my current research direction: a single device that first scans the scene with structured light to build a precise 3D model, then immediately switches to SAR display mode to project geometrically corrected digital content onto that scene in real time. This enables applications ranging from interactive design visualization and surgical training to museum installations and industrial assembly guidance.

My group developed a structured light smart camera architecture that integrates the projector, camera, and processing pipeline into a single compact unit capable of both 3D scanning and SAR display. M.S. student Matthew Ruffner completed his thesis on the machine vision camera design in 2018, and Ph.D. student Ying Yu completed the first full dissertation on this topic in 2019.

A proof-of-concept SAR system for augmented reality intubation training for first responders was developed with internal seed funding from the University of Kentucky Vice President for Research and College Deans Igniting Research Collaborations (IRC) program ($36K, 2020–2021). This project demonstrated that a structured light SAR system could register digital anatomical overlays onto a physical training mannequin in real time, providing visual guidance to first responders learning emergency airway management, a compelling early application of the technology to clinical training.

An interdisciplinary extension of this SAR work is the Digital Skin project, a collaboration with artist Siavash Tohidi from UK's School of Art and Visual Studies and Dr. Michael Winkler from the Department of Radiology. The system uses an Intel RealSense depth camera with OpenCV-based face detection to track a subject's facial features in real time, then projects digital masks and textures onto the face using a smart laser projector with built-in eye-safety features. The project explores the interchangeability of digital and physical identity, and demonstrates how structured light SAR technology can bridge engineering, art, and medicine.

Key publication from Phase 1:

- C. Guan, L. G. Hassebrook, and D. L. Lau, "Composite Structured Light Pattern for Three-Dimensional Video," Optics Express, vol. 11, no. 5, March 2003, pp. 406–417.

The core technical challenges in Phase 2 were:

- Designing projected patterns that could be captured in a single camera frame at sufficient resolution to resolve fingerprint ridge detail (ridge spacing of approximately 500 microns)

- Developing a robust fit-sphere unwrapping algorithm that maps the 3D fingerprint surface onto a standard flat representation while preserving minutiae geometry

- Building a non-contact acquisition booth with controlled illumination that could capture all five fingers simultaneously

Key publications from Phase 2:

- Y. Wang, L. G. Hassebrook, and D. L. Lau, "Data Acquisition and Processing of 3-D Fingerprints," IEEE Transactions on Information Forensics and Security, vol. 5, no. 4, December 2010, pp. 750–760. (122 citations)

- Y. Wang, D. L. Lau, and L. G. Hassebrook, "Fit-Sphere Unwrapping and Performance Analysis of 3-D Fingerprints," Applied Optics, vol. 49, no. 4, 2010.

- Y. Wang, D. L. Lau, and L. G. Hassebrook, "Quality and Matching Performance Analysis of Three-Dimensional Unraveled Fingerprints," Optical Engineering, vol. 49, no. 7, 2010.
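The fit-sphere idea named in the challenge list above can be illustrated with a short sketch: fit a sphere to the scanned fingertip points, then convert each point to arc-length coordinates on that sphere. This is a simplified stand-in under stated assumptions (an algebraic least-squares fit and a plain azimuth/elevation mapping on synthetic data), not the algorithm from the cited papers; all names and dimensions are hypothetical.

```python
import numpy as np

def fit_sphere(points):
    """Algebraic least-squares sphere fit. Returns (center, radius)."""
    # |p|^2 = 2 c.p + (r^2 - |c|^2) is linear in the unknowns (c, r^2 - |c|^2).
    A = np.hstack([2 * points, np.ones((len(points), 1))])
    b = np.sum(points ** 2, axis=1)
    sol, *_ = np.linalg.lstsq(A, b, rcond=None)
    center = sol[:3]
    radius = np.sqrt(sol[3] + center @ center)
    return center, radius

def unroll(points, center, radius):
    """Map 3D points to flat (u, v) arc-length coordinates on the fitted sphere."""
    d = points - center
    azimuth = np.arctan2(d[:, 0], d[:, 2])                      # angle about the y axis
    elevation = np.arcsin(d[:, 1] / np.linalg.norm(d, axis=1))
    return np.column_stack([radius * azimuth, radius * elevation])

# Synthetic fingertip patch: a spherical cap of radius 8 mm (hypothetical numbers).
rng = np.random.default_rng(1)
az = rng.uniform(-0.6, 0.6, 500)
el = rng.uniform(-0.6, 0.6, 500)
true_center, true_radius = np.array([1.0, -2.0, 30.0]), 8.0
patch = true_center + true_radius * np.column_stack(
    [np.cos(el) * np.sin(az), np.sin(el), np.cos(el) * np.cos(az)])

center, radius = fit_sphere(patch)
flat = unroll(patch, center, radius)        # 2D coordinates in millimeters
```

An angle-based mapping like this stretches distances away from the patch center, so a practical unrolling step would also need to control that distortion to preserve minutiae geometry.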

Key publications from Phase 3:

- K. Liu, Y. Wang, D. L. Lau, Q. Hao, and L. G. Hassebrook, "Dual-Frequency Pattern Scheme for High-Speed 3-D Shape Measurement," Optics Express, vol. 18, no. 5, March 2010, pp. 5229–5244. (460+ citations; most downloaded open-access paper in the OSA library for four consecutive months after publication)

- K. Liu, Y. Wang, D. L. Lau, Q. Hao, and L. G. Hassebrook, "Gamma Model and its Analysis for Phase Measuring Profilometry," JOSA A, vol. 27, no. 3, 2010. (250+ citations)

- Y. Wang, K. Liu, Q. Hao, X. Wang, D. L. Lau, and L. G. Hassebrook, "Robust Active Stereo Vision Using Kullback-Leibler Divergence," IEEE Transactions on Pattern Analysis and Machine Intelligence, 2012.

- Y. Wang, K. Liu, Q. Hao, D. L. Lau, and L. G. Hassebrook, "Period Coded Phase Shifting Strategy for Real-Time 3-D Structured Light Illumination," IEEE Transactions on Image Processing, vol. 20, no. 11, 2011. (125 citations)

Key publications from Phase 5:

- K. Liu, S. Wang, D. L. Lau, et al., "Nonlinearity Calibrating Algorithm for Structured Light Illumination," Optical Engineering Letters, vol. 53, no. 5, 2014.

- Y. Zhang, D. L. Lau, and Y. Yu, "Causes and Corrections for Bimodal Multipath in Structured Light Scanning," CVPR, 2019.

- Y. Zhang and D. L. Lau, "BimodalPS: Causes and Corrections for Bimodal Multi-Path in Phase-Shifting Structured Light Scanners," IEEE Transactions on Pattern Analysis and Machine Intelligence, 2024.

- Y. Zhang, D. L. Lau, and D. Wipf, "Sparse Multi-Path Corrections in Fringe Projection Profilometry," CVPR, 2021.

- K. Liu et al., "Extending Epipolar Geometry for Real-Time Structured Light Illumination," Optics Letters, vol. 45, 2020.

- K. Liu et al., "Extending Epipolar Geometry for Real-Time Structured Light Illumination II: Lossless Accuracy," Optics Letters, vol. 46, 2021.

- K. Liu, S. Ying, D. L. Lau, et al., "Symmetrical Epipolar Features Over Normalized Camera/Projector Calibration Matrices for Real-Time Structured Light Illumination," IEEE Signal Processing Letters, vol. 29, 2022.

- G. Zhang, D. L. Lau, et al., "Correcting Projector Lens Distortion in Real Time with a Scale-Offset Model for Structured Light Illumination," Optics Express, vol. 30, 2022.

- G. Zhang, D. L. Lau, et al., "Circular Fringe Projection Profilometry and 3D Sensitivity Analysis Based on Extended Epipolar Geometry," Optics and Lasers in Engineering, vol. 162, 2023.

- R. Gao, X. Zhao, D. L. Lau, et al., "One-Shot Structured Light Illumination Based on Shearlet Transform," Optics Express, vol. 32, 2024.

Key conference publications from Phase 6:

- M. P. Ruffner, Y. Yu, and D. L. Lau, "Structured Light Smart Camera for Spatial Augmented Reality Applications," Proceedings of SPIE, Emerging Digital Micromirror Device Based Systems and Applications XI, February 2019.

- Y. Yu, D. L. Lau, and M. P. Ruffner, "3D Scanning by Means of Dual-Projector Structured Light Illumination," Proceedings of SPIE, February 2019.

This research thrust is protected by eleven U.S. patents covering structured light pattern design, real-time 3D reconstruction, projector-camera calibration, non-contact fingerprint scanning, and SAR display systems, including:

| Patent Number | Title | Year |
|---|---|---|
| 7,440,590 | System and Technique for Retrieving Depth Information about a Surface by Projecting a Composite Image of Modulated Light Patterns (186 citations) | 2008 |
| 8,224,064 | System and Method for 3D Imaging Using Structured Light Illumination | 2012 |
| 8,947,677 | Dual-Frequency Phase Multiplexing and Period Coded Phase Measuring Pattern Strategies in 3-D Structured Light Systems | 2015 |
| 8,976,367 | Structured Light 3-D Measurement Module and System for Illuminating a Subject-Under-Test in Relative Linear Motion with a Fixed-Pattern Optic | 2015 |
| 9,444,981 | Portable Structured Light Measurement Module with Pattern Shifting Device Incorporating a Fixed-Pattern Optic (121 citations) | 2016 |

Funding Summary for This Thrust

| Sponsor | Program | Amount | Period |
|---|---|---|---|
| NASA | STTR Phase I: Real-Time Range Sensing Camera | $100K | 2004–2005 |
| Natl. Institutes for Hometown Security | 3D Finger and Palm Print Scanner (NIJ Fast Fingerprint) | $988K | 2009–2010 |
| Natl. Institutes for Hometown Security | Active and Passive Range Sensor Fusion for Surveillance | $650K | 2004–2006 |
| U.S. Marine Corps / U.S. Air Force | Anti-Sniper Infrared Targeting System, Phases I–III | $2M+ (UK share) | 2004–2010 |
| Toyota | Large Area Structured-Light Illumination Scanner | $200K | 2005–2006 |
| Michigan Aerospace / NASA SBIR | High Performance 3D Surface Scanning | $50K | 2006 |
| Gentle Giant Studios | Facial Expression 3D Scanner | $60K | 2006–2007 |
| UK IRC (internal) | AI-Powered AR Intubation Training for First Responders | $36K | 2020–2021 |

Graduate Alumni from This Thrust

| Student | Degree | Institution | Year | Current Position |
|---|---|---|---|---|
| Wei Su | Ph.D. | University of Kentucky | 2006 | — |
| Abhishika Fatehpuria | M.S. | University of Kentucky | 2006 | Biomet Orthopaedic Advantage |
| Andrew Tan | M.S. | University of Kentucky | 2006 | — |
| Yongchang Wang | M.S., Ph.D. | University of Kentucky | 2008, 2010 | HTG Capital Partners |
| Kai Liu | Ph.D. | University of Kentucky | 2010 | Sichuan University |
| Eric Dedrick | Ph.D. | University of Kentucky | 2011 | — |
| Sen Li | M.S. | University of Kentucky | 2016 | CHEO Research Institute |
| Matthew Ruffner | M.S. | University of Kentucky | 2018 | — |
| Ying Yu | Ph.D. | University of Kentucky | 2019 | ASML |